All tables in ClickHouse are immutable. This impacts both data collection and storage, as well as how we analyze the values themselves.

Related topics: columnar compression into row-oriented storage, and functional programming into PostgreSQL using custom operators.

OLTP workloads typically involve:

- Transactional data (the raw, individual records matter)
- Many users performing varied queries and updates on data across the system
- SQL is the primary language for interaction

OLAP workloads typically involve:

- Large datasets focused on reporting/analysis
- Pre-aggregated or transformed data to foster better reporting
- Fewer users performing deep data analysis with few updates
- Often, but not always, utilizes a particular query language other than SQL

Topics covered:

- What is ClickHouse (including a deep dive of its architecture)
- How does ClickHouse compare to PostgreSQL
- How does ClickHouse compare to TimescaleDB
- How does ClickHouse perform for time-series data vs. TimescaleDB

Worse query performance than TimescaleDB at nearly all queries in the.

You made it to the end! Check. But nothing in databases comes for free. In our example, we use this condition: p.course_code=e.course_code AND p.student_id=e.student_id. It turns out that when you have much smaller batches of data to ingest, ClickHouse is significantly slower and consumes much more disk space than TimescaleDB. There are batch deletes and updates available to clean up or modify data, for example, to comply with GDPR, but not for regular workloads. In other words, data is filtered or aggregated, so the result fits in a single server's RAM. As a result, we won't compare the performance of ClickHouse vs. PostgreSQL because - to continue our analogy from before - it would be like comparing the performance of a bulldozer vs. a car. In the next condition, we get the course_code column from the enrollment table and course_code from the payment table. An alternative syntax for CROSS JOIN is specifying multiple tables in the FROM clause, separated by commas. Overall, ClickHouse handles basic SQL queries well. There is not currently a tool like timescaledb-tune for ClickHouse. This is because the most recent uncompressed chunk will often hold the majority of those values as data is ingested, and it is a great example of why this flexibility with compression can have a significant impact on the performance of your application. After materializing our top 100 properties and updating our queries, we analyzed slow queries (>3 seconds long).

The SELECT TOP clause is useful on large tables with thousands of records. Check. Most of the time, a car will satisfy your needs. I spent a long time looking at the reference at https://clickhouse.yandex/reference_en.html. Instead, users are encouraged to query table data with separate sub-select statements and then use something like an `ANY INNER JOIN`, which strictly looks for unique pairs on both sides of the join (avoiding a cartesian product that can occur with standard JOIN types). We help you build better products faster, without user data ever leaving your infrastructure. But we found that even some of the ones labeled synchronous weren't really synchronous either. TimescaleDB is the leading relational database for time-series, built on PostgreSQL.

Even at 500-row batches, ClickHouse consumed 1.75x more disk space than TimescaleDB for a source data file that was 22GB in size. One last aspect to consider as part of the ClickHouse architecture and its lack of support for transactions is that there is no data consistency in backups. If you want to host TimescaleDB yourself, you can do it completely for free - visit our GitHub to learn more about options, get installation instructions, and more. We had to add a 10-minute sleep into the testing cycle to ensure that ClickHouse had released the disk space fully. It's one of the main reasons for the recent resurgence of PostgreSQL in the wider technical community. It's hard to find now where it has been fixed. The open-source relational database for time-series and analytics. The typical way to do this in SQL Server 2005 and up is to use a CTE and windowing functions. Overall, for inserts we find that ClickHouse outperforms with large batch sizes - but underperforms with smaller batch sizes. A source can be a table in another database (ClickHouse, MySQL or generic ODBC), file, or web service. We'll go into a bit more detail below on why this might be, but this also wasn't completely unexpected. We list ClickHouse's limitations and weaknesses not because we think ClickHouse is a bad database. ClickHouse chose early in its development to utilize SQL as the primary language for managing and querying data.

TimescaleDB is a relational database for time-series: purpose-built on PostgreSQL for time-series workloads. (For one specific example of the powerful extensibility of PostgreSQL, please read how our engineering team built functional programming into PostgreSQL using custom operators.) Also, PostgreSQL isn't just an OLTP database: it's the fastest growing and most loved OLTP database (DB-Engines, StackOverflow 2021 Developer Survey). Over the last few years, however, the lines between the capabilities of OLTP and OLAP databases have started to blur. Full text search? Tables are wide, meaning they contain a large number of columns. That is, spending a few hundred hours working with both databases often causes us to consider ways we might improve TimescaleDB (in particular), and thoughtfully consider when we can - and should - say that another database solution is a good option for specific workloads. In some tests, ClickHouse proved to be a blazing fast database, able to ingest data faster than anything else we've tested so far (including TimescaleDB). For this case, we use a broad set of queries to mimic the most common query patterns. We really wanted to understand how each database works across various datasets. Add TimescaleDB. On our test dataset, mat_$current_url is only 1.5% the size of properties_json on disk with a 10x compression ratio. If we wanted to query login page pageviews in August, the query would look like this: This query takes a while to complete on a large test dataset, but without the URL filter the query is almost instant. Yet this can lead to unexpected behavior and non-standard queries. Again, this is by design, so there's nothing specifically wrong with what's happening in ClickHouse! It is created outside of databases. Understanding ClickHouse, and then comparing it with PostgreSQL and TimescaleDB, made us appreciate that there is a lot of choice in today's database market - but often there is still only one right tool for the job.
With vectorized computation, ClickHouse can specifically work with data in blocks of tens of thousands of rows (per column) for many computations. Queries are just a bit ugly but it works.

There's also no caching support for the product of a JOIN, so if a table is joined multiple times, the query on that table is executed multiple times, further slowing down the query. Let me start by saying that this wasn't a test we completed in a few hours and then moved on from.

Thank you for taking the time to read our detailed report. In our experience running benchmarks in the past, we found that this cardinality and row count works well as a representative dataset for benchmarking because it allows us to run many ingest and query cycles across each database in a few hours. By the way, does this task introduce a cost model? A query result is significantly smaller than the source data. This works well because not every query needs optimizing and a relatively small subset of properties make up most of what's being filtered on by our users. As a result, all of the advantages for PostgreSQL also apply to TimescaleDB, including versatility and reliability. The easiest way to get started is by creating a free Timescale Cloud account, which will give you access to a fully-managed TimescaleDB instance (100% free for 30 days). The key thing to understand is that ClickHouse only triggers off the left-most table in the join. (In contrast, in row-oriented storage, used by nearly all OLTP databases, data for the same table row is stored together.) We fully admit, however, that compression doesn't always return favorable results for every query form. But even then, it only provides limited support for transactions. This is the basic case of what the ARRAY JOIN clause does. Notice that with numeric values, you can get the "correct" answer by multiplying all values by the `Sign` column and adding a HAVING clause. This means asking for the most recent value of an item still causes a more intense scan of data in OLAP databases. The trade-off is more data being stored on disk. ClickHouse was designed with the desire to have "online" query processing in a way that other OLAP databases hadn't been able to achieve. In some complex queries, particularly those that do complex grouping aggregations, ClickHouse is hard to beat.
The difference is that TimescaleDB gives you control over which chunks are compressed.

As we can see above, ClickHouse is a well-architected database for OLAP workloads. For more complex double rollups, ClickHouse outperforms TimescaleDB every time. Based on ClickHouse's reputation as a fast OLAP database, we expected ClickHouse to outperform TimescaleDB for nearly all queries in the benchmark. ClickHouse will then asynchronously delete rows with a `Sign` that cancel each other out (a value of 1 vs -1), leaving the most recent state in the database. For simple queries, TimescaleDB outperforms ClickHouse, regardless of whether native compression is used. The materialized view is populated with a SELECT statement and that SELECT can join multiple tables. @sartor There is some progress like basic ON support, removal of most limitations for right side, better asterisk behaviour, ANY/ALL can be configured to be optional. More importantly, this holds true for all data that is stored in ClickHouse, not just the large, analytically focused tables that store something like time-series data, but also the related metadata. The parameter passed to Decimal32(S) is the scale, that is, the number of digits after the decimal point. A Decimal32 value holds at most 9 significant decimal digits in total, so, for example, Decimal32(5) can contain numbers from -9999.99999 to 9999.99999. When rows are batched between 5,000 and 15,000 rows per insert, speeds are fast for both databases, with ClickHouse performing noticeably better. However, when the batch size is smaller, the results are reversed in two ways: insert speed and disk consumption. In the rest of this article, we do a deep dive into the ClickHouse architecture, and then highlight some of the advantages and disadvantages of ClickHouse, PostgreSQL, and TimescaleDB that result from the architectural decisions that each of its developers (including us) have made.
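
The Decimal32 range mentioned above can be checked with a little arithmetic. The following is a minimal Python sketch, not ClickHouse code; the helper name `decimal32_bounds` is hypothetical, and it assumes that Decimal32 stores at most 9 significant decimal digits with the parameter acting as the scale.

```python
from decimal import Decimal

DECIMAL32_PRECISION = 9  # assumption: Decimal32 holds at most 9 significant digits


def decimal32_bounds(scale):
    """Return (min, max) representable by a Decimal32 with the given scale.

    Hypothetical helper for illustration only.
    """
    if not 0 <= scale <= DECIMAL32_PRECISION:
        raise ValueError("scale must be between 0 and 9")
    # Largest 9-digit integer, shifted right by `scale` decimal places.
    largest = Decimal(10 ** DECIMAL32_PRECISION - 1).scaleb(-scale)
    return -largest, largest


low, high = decimal32_bounds(5)
print(low, high)
```

With a scale of 5, only 4 digits remain for the integer part, which is why the bounds are narrower than a "5 digits on each side" reading would suggest.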

Lack of transactions and lack of data consistency also affect other features like materialized views, because the server can't atomically update multiple tables at once.

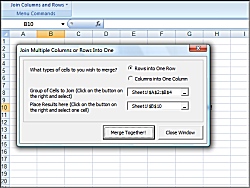

Timeline of ClickHouse development (full history here).

Therefore, they're used in the payment table as a foreign key. Requires high throughput when processing a single query (up to billions of rows per second per server). Data is added to the DB but is not modified. 2021 Timescale, Inc. All Rights Reserved. Could your application benefit from the ability to search using trigrams? We, the authors of this post, are very active on all channels - as well as all our engineers, members of Team Timescale, and many passionate users. ClickHouse, short for Clickstream Data Warehouse, is a columnar OLAP database that was initially built for web analytics in Yandex Metrica. Execution improvements are also planned, but in previous comment I meant only syntax. At the end of each cycle, we would `TRUNCATE` the database in each server, expecting the disk space to be released quickly so that we could start the next test. As we've already shown, all data modification (even sharding across a cluster) is asynchronous, therefore the only way to ensure a consistent backup would be to stop all writes to the database and then make a backup. Dear All, I'm just moving MySQL to ClickHouse. These files are later processed in the background at some point in the future and merged into a larger part with the goal of reducing the total number of parts on disk (fewer files = more efficient data reads later). Instead, because all data is stored in primary key order, the primary index stores the value of the primary key every N-th row (called index_granularity, 8192 by default). Because there are no transactions to verify that the data was moved as part of something like two-phase commits (available in PostgreSQL), your data might not actually be where you think it is. We aren't the only ones who feel this way.
Regardless, the related business data that you may store in ClickHouse to do complex joins and deeper analysis is still in a MergeTree table (or variation of a MergeTree), and therefore, updates or deletes would still require an entire rewrite (through the use of `ALTER TABLE`) any time there are modifications. PostgreSQL supports a variety of data types including arrays, JSON, and more.
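
To make the sparse primary index idea more concrete (one stored key every `index_granularity` rows), here is a rough Python sketch. It is a simplified model under stated assumptions, not how ClickHouse is implemented: the function names are hypothetical, keys are plain unique integers already sorted in primary key order, and a "granule" is simply a slice of that list.

```python
from bisect import bisect_right

INDEX_GRANULARITY = 8192  # default value mentioned in the text


def build_sparse_index(sorted_keys, granularity=INDEX_GRANULARITY):
    """Keep only the key of the first row of each granule."""
    return sorted_keys[::granularity]


def granule_for(sparse_index, key):
    """Return the number of the only granule that could contain `key`."""
    return max(bisect_right(sparse_index, key) - 1, 0)


keys = list(range(100_000))        # rows already stored in primary key order
index = build_sparse_index(keys)   # 13 marks instead of 100,000 entries

g = granule_for(index, 20_000)
start = g * INDEX_GRANULARITY
end = min(start + INDEX_GRANULARITY, len(keys))
found = 20_000 in keys[start:end]  # scan a single granule, not the whole table
print(len(index), g, found)
```

The index stays tiny because it never points at individual rows; the cost is that every lookup still scans a whole granule, which fits analytical scans better than single-row lookups.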

You can mitigate this risk (e.g., robust software engineering practices, uninterrupted power supplies, disk RAID, etc.), but not eliminate it completely; it's a fact of life for systems. It offers everything PostgreSQL has to offer, plus a full time-series database. Data is inserted in fairly large batches (> 1000 rows), not by single rows; or it is not updated at all. Finally, depending on the time range being queried, TimescaleDB can be significantly faster (up to 1760%) than ClickHouse for grouped and ordered queries. This is a result of the chunk_time_interval, which determines how many chunks will get created for a given range of time-series data. What our results didn't show is that queries that read from an uncompressed chunk (the most recent chunk) are 17x faster than ClickHouse, averaging 64ms per query. The query looks like this in TimescaleDB: As you might guess, when the chunk is uncompressed, PostgreSQL indexes can be used to quickly order the data by time. All columns in a table are stored in separate parts (files), and all values in each column are stored in the order of the primary key. Therefore, the queries to get data out of a CollapsingMergeTree table require additional work, like multiplying rows by their `Sign`, to make sure you get the correct value any time the table is in a state that still contains duplicate data. In our benchmark, TimescaleDB demonstrates 156% the performance of ClickHouse when aggregating 8 metrics across 4000 devices, and 164% when aggregating 8 metrics across 10,000 devices. Sure, we can always throw more hardware and resources to help spike numbers, but that often doesn't help convey what most real-world applications can expect. As soon as the truncate is complete, the space is freed up on disk. Are you curious about TimescaleDB?
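
The "multiply by `Sign`" pattern for CollapsingMergeTree queries can be illustrated without a ClickHouse server. The sketch below uses Python's built-in `sqlite3` purely to emulate the query shape; the table and column names (`user_activity`, `page_views`) are made up for illustration, and SQLite does none of the background merging that ClickHouse performs.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE user_activity (user_id INTEGER, page_views INTEGER, sign INTEGER)"
)
conn.executemany(
    "INSERT INTO user_activity VALUES (?, ?, ?)",
    [
        (1, 5, 1),   # original state row for user 1
        (1, 5, -1),  # cancel row for that state
        (1, 6, 1),   # new state row for user 1
        (2, 3, 1),   # state row for user 2
        (2, 3, -1),  # cancelled with no replacement: user 2 is effectively deleted
    ],
)

# Multiplying by sign makes cancelled pairs sum to zero; HAVING drops keys
# whose rows have all been cancelled.
rows = conn.execute(
    """
    SELECT user_id, SUM(page_views * sign) AS page_views
    FROM user_activity
    GROUP BY user_id
    HAVING SUM(sign) > 0
    ORDER BY user_id
    """
).fetchall()
print(rows)  # [(1, 6)]
```

The query returns the correct current state whether or not the cancelling rows have been physically removed yet, which is the point of writing it this way.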

Most actions in ClickHouse are not synchronous. (Ingesting 100 million rows, 4,000 hosts, 3 days of data - 22GB of raw data). For example, retraining users who will be accessing the database (or writing applications that access the database). The student table has data in the following columns: id (primary key), first_name, and last_name. And if your applications have time-series data - and especially if you also want the versatility of PostgreSQL - TimescaleDB is likely the best choice. ClickHouse achieves these results because its developers have made specific architectural decisions.

There is one large table per query. It is on the roadmap for Q4 of 2018 (but it's just a roadmap, not a hard schedule). Additional join types available in ClickHouse: LEFT SEMI JOIN and RIGHT SEMI JOIN, a whitelist on join keys, without producing a cartesian product. Also, through the use of extensions, PostgreSQL can retain the things it's good at while adding specific functionality to enhance the ROI of your development efforts. One of the key takeaways from this last set of queries is that the features provided by a database can have a material impact on the performance of your application. Here is how that query is written for each database. It supports a variety of index types - not just the common B-tree but also GIST, GIN, and more. PostHog is an open source analytics platform you can host yourself. For queries, we find that ClickHouse underperforms on most queries in the benchmark suite, except for complex aggregates. This table can be used to store a lot of analytics data and is similar to what we use at PostHog. We've seen numerous recent blog posts about ClickHouse ingest performance, and since ClickHouse uses a different storage architecture and mechanism that doesn't include transaction support or ACID compliance, we generally expected it to be faster. This difference should be expected because of the architectural design choices of each database, but it's still interesting to see. It will include not only the first expensive product but also the second one, and so on.
Often, the best way to benchmark read latency is to do it with the actual queries you plan to execute. As an example, consider a common database design pattern where the most recent values of a sensor are stored alongside the long-term time-series table for fast lookup. Data recovery struggles with the same limitation. In most time-series applications, especially things like IoT, there's a constant need to find the most recent value of an item or a list of the top X things by some aggregation. All tables are small, except for one. How can we join the tables with these compound keys? ClickHouse has great tools for introspecting queries. Although ingest speeds may decrease with smaller batches, the same chunks are created for the same data, resulting in consistent disk usage patterns. Distributed tables are another example of where asynchronous modifications might cause you to change how you query data. There's no specific guarantee for when that might happen. There is no way to directly update or delete a value that's already been stored. Here is an example: #532 (comment). Let's show each student's name, course code, and payment status and amount. We wanted to really understand how each database would perform with typical cloud hardware and the specs that we often see in the wild.
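
As a sketch of the compound-key join discussed above (student name, course code, payment status and amount), the following Python snippet runs the join in an in-memory SQLite database. The student columns and the join condition come from the text; the remaining column layout (`status`, `amount`) and all sample rows are assumptions made up for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE student (id INTEGER PRIMARY KEY, first_name TEXT, last_name TEXT);
    CREATE TABLE enrollment (
        student_id INTEGER,
        course_code TEXT,
        PRIMARY KEY (student_id, course_code)  -- compound key
    );
    CREATE TABLE payment (student_id INTEGER, course_code TEXT, status TEXT, amount REAL);

    INSERT INTO student VALUES (1, 'Ada', 'Lovelace'), (2, 'Alan', 'Turing');
    INSERT INTO enrollment VALUES (1, 'DB101'), (2, 'DB101'), (2, 'ML200');
    INSERT INTO payment VALUES
        (1, 'DB101', 'paid', 100.0),
        (2, 'DB101', 'pending', 100.0),
        (2, 'ML200', 'paid', 250.0);
    """
)

# Both halves of the compound key must match, otherwise a payment would be
# paired with every enrollment sharing just one of the two columns.
rows = conn.execute(
    """
    SELECT s.first_name, s.last_name, e.course_code, p.status, p.amount
    FROM enrollment e
    JOIN student s ON s.id = e.student_id
    JOIN payment p ON p.course_code = e.course_code AND p.student_id = e.student_id
    ORDER BY s.id, e.course_code
    """
).fetchall()
for row in rows:
    print(row)
```

Dropping either half of the `AND` condition would multiply rows, for example pairing Ada's DB101 enrollment with Alan's DB101 payment.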

To be honest, this didn't surprise us. In particular, TimescaleDB exhibited up to 1058% the performance of ClickHouse on configurations with 4,000 and 10,000 devices with 10 unique metrics being generated every read interval. We actually think it's a great database - well, to be more precise, a great database for certain workloads. Yet every database is architected differently, and as a result, has different advantages and disadvantages.

As a developer, you should choose the right tool for the job. Adding even more filters just slows down the query. Traditional OLTP databases often can't handle millions of transactions per second or provide effective means of storing and maintaining the data. We also have a detailed description of our testing environment to replicate these tests yourself and verify our results. ClickHouse's limitations include: data can't be directly modified in a table; no index management beyond the primary and secondary indexes; no correlated subqueries or LATERAL joins. The test environment consisted of 1 remote client machine running TSBS and 1 database server, both in the same cloud datacenter. For the last decade, the storage challenge was mitigated by numerous NoSQL architectures, while still failing to effectively deal with the query and analytics required of time-series data. Clearly ClickHouse is designed with a very specific workload in mind. Issue needs a test before close. We are fans of ClickHouse. Want to host TimescaleDB yourself? The following is my query. For example, if the number of rows in table A is 100 and the number of rows in table B is 5, a CROSS JOIN between the 2 tables (A * B) would return 500 rows total. One solution to this disparity in a real application would be to use a continuous aggregate to pre-aggregate the data. These architectural decisions also introduce limitations, especially when compared to PostgreSQL and TimescaleDB. There is at least one other problem with how distributed data is handled. The table engine determines the type of table and the features that will be available for processing the data stored inside. However, when I wrote my query with more than one JOIN. Instead, any operations that UPDATE or DELETE data can only be accomplished through an `ALTER TABLE` statement that applies a filter and actually re-writes the entire table (part by part) in the background to update or delete the data in question.
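
The CROSS JOIN arithmetic above (100 rows times 5 rows gives 500 rows) is easy to confirm. This small Python sketch uses an in-memory SQLite database as a stand-in; the table names `a` and `b` are placeholders, and it also shows the comma-separated FROM syntax as an equivalent way to write the same cartesian product.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE a (id INTEGER)")
conn.execute("CREATE TABLE b (id INTEGER)")
conn.executemany("INSERT INTO a VALUES (?)", [(i,) for i in range(100)])
conn.executemany("INSERT INTO b VALUES (?)", [(i,) for i in range(5)])

# Explicit CROSS JOIN: every row of a paired with every row of b.
explicit = conn.execute("SELECT COUNT(*) FROM a CROSS JOIN b").fetchone()[0]

# Alternative syntax: multiple tables in FROM, separated by commas.
comma = conn.execute("SELECT COUNT(*) FROM a, b").fetchone()[0]

print(explicit, comma)  # 500 500
```
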
Generally in databases there are two types of fundamental architectures, each with strengths and weaknesses: OnLine Transactional Processing (OLTP) and OnLine Analytical Processing (OLAP). This is a common performance configuration for write-heavy workloads while still maintaining transactional, logged integrity. You can write a multi-way join even right now, but it requires explicit additional subqueries with two-way joins of the inner subquery and the Nth table. We point a few of these scenarios out to simply highlight the point that ClickHouse isn't a drop-in replacement for many things that a system of record (OLTP database) is generally used for in modern applications. ClickHouse supports temporary tables, which have the following characteristics: temporary tables disappear when the session ends, including if the connection is lost. For some complex queries, particularly a standard query like "lastpoint", TimescaleDB vastly outperforms ClickHouse. Visit our GitHub to learn more about options, get installation instructions, and more. Since I'm a layman in database/ClickHouse. In ClickHouse, the SQL isn't something that was added after the fact to satisfy a portion of the user community.
