All in all, Balanced Accuracy is the arithmetic mean of the recall of each class; it is "balanced" because every class has the same weight and the same importance. First, create a multi-class confusion matrix O between predicted and actual ratings.

Therefore, Cross-Entropy does not evaluate the goodness of the classification rule (the rule which translates the probabilities into the predicted class). For this setting, the Accuracy value is 0.689, whereas Balanced Accuracy is 0.615. Third, calculate `value_counts()` for each rating in `preds` and `actuals`. Thanks to these metrics, we can be quite confident that the F1-Score will spot weak points of the prediction algorithm, if any exist. Small classes are equivalent to big ones and the algorithm's performance on them is equally important, regardless of the class size. There are several versions of the F1 score depending on the expected granularity. For the required computations, we will use the Confusion Matrix, focusing on one class at a time and labelling the tiles accordingly. The formula of the F1-Score can be interpreted as a weighted average of Precision and Recall, where the F1-Score reaches its best value at 1 and its worst at 0. In multi-class classification, we may regard the response variable Y and the prediction Ŷ as two discrete random variables: they assume values in {1, ..., K} and each number represents a different class. This also means that Balanced Accuracy is insensitive to imbalanced class distribution and it gives more weight to the instances coming from minority classes.
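As a minimal sketch of how Accuracy and Balanced Accuracy can diverge, the snippet below computes both from a confusion matrix with NumPy. The matrix values and the function names are illustrative assumptions, not figures from the text; rows are assumed to hold the actual classes and columns the predictions.

```python
import numpy as np

def accuracy(confusion):
    """Share of correctly classified units (diagonal over total)."""
    confusion = np.asarray(confusion, dtype=float)
    return np.trace(confusion) / confusion.sum()

def balanced_accuracy(confusion):
    """Arithmetic mean of the per-class recall.

    Assumes rows = actual classes, columns = predicted classes,
    and that every class occurs at least once (no empty row).
    """
    confusion = np.asarray(confusion, dtype=float)
    row_totals = confusion.sum(axis=1)          # actual units per class
    recalls = np.diag(confusion) / row_totals   # recall of each class
    return recalls.mean()

# Hypothetical 3-class confusion matrix: the first class is small
# and mostly misclassified.
cm = [[2, 8, 0],
      [1, 45, 4],
      [0, 10, 30]]

print(accuracy(cm), balanced_accuracy(cm))
```

With this hypothetical matrix the small first class is mostly misclassified, so Balanced Accuracy (about 0.617) falls well below Accuracy (0.77).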

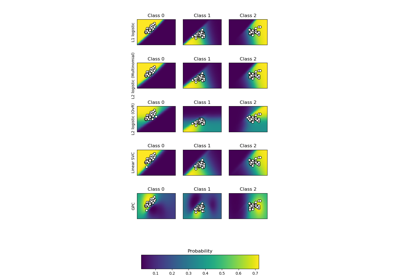

Those metrics turn out to be useful at different stages of the development process. The K statistic can take values from -1 to +1 and is interpreted somewhat arbitrarily as follows: 0 is the agreement equivalent to chance, from 0.10 to 0.20 is a slight agreement, from 0.21 to 0.40 is a fair agreement, from 0.41 to 0.60 is a moderate agreement, from 0.61 to 0.80 is a substantial agreement, from 0.81 to 0.99 is a near perfect agreement and 1.00 is a perfect agreement. Long story short, we may see that Micro-Average Precision and Recall are just the same values, therefore the Micro-Average F1-Score is the same as well (the harmonic mean of two equal values is just that value). Starting from the probability assigned by the model, in the two-class classification problem a threshold is usually applied to decide which class has to be predicted for each unit. The more generic F_β score applies additional weights, valuing one of precision or recall more than the other. As we saw in the article Classification Metrics: [ADD LINK TO BINARY CLASSIFICATION POST], AUC (Area Under the ROC Curve), which measures the probability that a positive instance has a higher score than a negative instance, is a well-known performance metric for a scoring function's ranking quality. In a multi-class classification problem, we define the logarithmic loss function F in terms of the logarithmic loss function per label F_i. The cost function F measures the distance between two probability distributions. On the other hand, the algorithm prediction itself generates a numeric vector ŷ(i), with the probability for the i-th unit to belong to each class. AUC = 0 implies that your model is very bad (or very good!).
It is important to remove the Expected Accuracy (the random agreement component for Cohen and the two independent components for us) from the Accuracy for two reasons: first, the Expected Accuracy is related to a classifier that assigns units to classes completely at random; second, it is important to find a model's Prediction that is as dependent as possible on the Actual distribution. Performance indicators are very useful when the aim is to evaluate and compare different classification models or machine learning techniques. The obtained metric evaluates the algorithm from a class standpoint: high Macro-F1 values indicate that the algorithm has good performance on all the classes, whereas low Macro-F1 values refer to poorly predicted classes. If we have a model that classifies the units in random classes, preserving just the distribution of the predicted classes, its Accuracy should be close to P_e. As an example, we consider Model A with Precision equal to Recall (80%), and Model B whose Precision is 60% and Recall is 100%. In simple words, if we choose a random unit and predict its class, Accuracy is the probability that the model prediction is correct.
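To make the role of the Expected Accuracy concrete, here is a small sketch of Cohen's Kappa computed from a confusion matrix. It uses the standard definition K = (P_o - P_e) / (1 - P_e); the function name and the example matrices are assumptions introduced for illustration.

```python
import numpy as np

def cohen_kappa(confusion):
    """Cohen's Kappa from a confusion matrix (rows = actual, columns = predicted)."""
    c = np.asarray(confusion, dtype=float)
    n = c.sum()
    p_o = np.trace(c) / n                                  # observed agreement (Accuracy)
    p_e = (c.sum(axis=0) * c.sum(axis=1)).sum() / n ** 2   # agreement expected by chance
    return (p_o - p_e) / (1.0 - p_e)                       # undefined when p_e == 1

# Hypothetical model that assigns every unit to the first class:
# Accuracy is 0.80, but all of it is explained by chance agreement.
all_in_one_class = [[80, 0],
                    [20, 0]]
print(cohen_kappa(all_in_one_class))

# Hypothetical perfect classifier on a balanced dataset.
perfect = [[5, 0],
           [0, 5]]
print(cohen_kappa(perfect))
```

In the first case the Accuracy of 0.80 equals the Expected Accuracy, so the score is 0; in the second the score is 1.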

When we evaluate the Micro-Average Recall, we observe that it is exactly equal to the Micro-Average Precision: both measures rely on the sum of the True Positives, and the only difference is in the denominator, where we consider the Column Totals for the Precision calculation and the Row Totals for the Recall calculation. Summed over all classes, both denominators equal the total number of units. Compared with Balanced Accuracy, we also add the sum of the weights W at the denominator.
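The equality between the micro-averaged measures can be checked numerically. The sketch below is an illustration under the assumption that rows are actual classes and columns are predictions; the matrix and the function name are hypothetical.

```python
import numpy as np

def micro_scores(confusion):
    """Return micro-average precision, recall, F1 and plain accuracy."""
    c = np.asarray(confusion, dtype=float)
    true_positives = np.diag(c).sum()
    micro_precision = true_positives / c.sum(axis=0).sum()  # sum of column totals
    micro_recall = true_positives / c.sum(axis=1).sum()     # sum of row totals
    micro_f1 = (2 * micro_precision * micro_recall
                / (micro_precision + micro_recall))
    acc = np.trace(c) / c.sum()
    return micro_precision, micro_recall, micro_f1, acc

# Hypothetical confusion matrix.
cm_micro = [[2, 8, 0],
            [1, 45, 4],
            [0, 10, 30]]
print(micro_scores(cm_micro))
```

All four values coincide because the column totals and the row totals both add up to the total number of units.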

Negative values indicate that the observed agreement is worse than what would be expected by chance. The Recall measures the model's predictive accuracy for the positive class: intuitively, it measures the ability of the model to find all the Positive units in the dataset. In the multi-class case, the calculation of Cohen's Kappa Score changes its structure and becomes more similar to the Matthews Correlation Coefficient [dataminingmethods]. (Example of a confusion matrix for multi-class classification in Prevision.io.) In the following paragraphs, we review two-class classification concepts, which will come in handy later. In particular, True Positives are the elements that have been labelled as positive by the model and are actually positive, while False Positives are the elements that have been labelled as positive by the model but are actually negative. For a binary classification problem, if I predict all targets as 0.5, I will get an AUC of 0.5. When the AUC is below 0.5, most of the time it is because you inverted the classes. Firstly, Cohen's Kappa allows the joint comparison of two models for which the same accuracy has been registered but with different values of Cohen's Kappa. The addends "Precision" and "Recall" can refer both to binary classification and to multi-class classification, as shown in Chapter 1.2: in the binary case we only consider the Positive class (therefore the True Negative elements have no importance), while in the multi-class case we consider all the classes one by one and, as a consequence, all the entries of the confusion matrix. The confusion matrix is a cross table that records the number of occurrences between two raters, the true/actual classification and the predicted classification, as shown in Figure 1. Quadratic Weighted Kappa is also called Weighted Cohen's Kappa.

[10.1371/journal.pone.0177678]. The smallest classes, when misclassified, are able to drop the value of Balanced Accuracy, since they have the same importance as the largest classes in the equation. In the multi-class case MCC seems to depend on correctly classified elements, because the total number of elements correctly predicted, c, is multiplied by the total number of elements, s, in the numerator, and the weight of this product is greater than that of the sum Σ_k p_k·t_k. This sum also includes the elements wrongly classified by the model and covers multiplicative entities that are weaker than the product c·s. For consistency throughout the paper, the columns stand for the model prediction whereas the rows display the true classification. If the dataset is quite balanced, i.e. the classes are almost the same size, Accuracy and Balanced Accuracy tend to converge to the same value. Only in the 2000s did MCC become a widely employed metric to test the performance of Machine Learning techniques, with some extensions to the multi-class case [Chicco2020]. Error rate is deduced from the previous Accuracy metric. Eventually, Macro F1-Score is the harmonic mean of Macro-Precision and Macro-Recall. It is possible to derive some intuitions from the equation. Figure 3 shows how the actual classification is unbalanced towards classes "b" and "c". In fact a poor performance on small classes is not so important, since the number of units belonging to those classes is small compared to the dataset size. All in all, we may regard the Macro F1-Score as an average measure of the average precision and average recall of the classes.
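The multi-class MCC discussed above can be sketched directly from the quantities c, s, p_k and t_k. The snippet assumes the usual multi-class form, (c·s - Σ_k p_k·t_k) divided by the square root of (s² - Σ_k p_k²)(s² - Σ_k t_k²); returning 0 when the denominator vanishes is a convention chosen here for illustration, and the function name is hypothetical.

```python
import numpy as np

def multiclass_mcc(confusion):
    """Multi-class Matthews Correlation Coefficient from a confusion matrix."""
    C = np.asarray(confusion, dtype=float)
    c = np.trace(C)       # units correctly predicted
    s = C.sum()           # total number of units
    t = C.sum(axis=1)     # row totals
    p = C.sum(axis=0)     # column totals
    numerator = c * s - (p * t).sum()
    denominator = np.sqrt((s ** 2 - (p ** 2).sum()) * (s ** 2 - (t ** 2).sum()))
    if denominator == 0:
        return 0.0        # assumed convention for the degenerate case
    return numerator / denominator

# Hypothetical model assigning every unit to one class: Accuracy is 0.80, MCC is 0.
print(multiclass_mcc([[80, 0], [20, 0]]))
# Hypothetical perfect classifier: MCC is 1.
print(multiclass_mcc([[5, 0], [0, 5]]))
```

Because the formula is symmetric in p and t, the result does not depend on whether rows or columns hold the actual classes.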

On the contrary, trying to reverse the concept and build the Micro F1-Score just gives us the Accuracy formula. y(i) and ŷ(i) are generated respectively from the conditioned random variables Y|X and Ŷ|X. Computing the F1-Score, Model A obtains a score of 80%, while Model B scores only 75% [shmueli_2019]. So Cohen's Kappa turns out to be a measure of how much the model's prediction depends on the Actual distribution, with the aim of identifying the best classification learning algorithm. Both predictions equal 0.4 but, substantially, they have different performance from the aggregate perspective: in Panel (a) the highest-probability class is 2, whereas in Panel (b) it is 6. Accuracy returns an overall measure of how well the model predicts on the entire set of data. An N-by-N histogram matrix of expected ratings, E, is calculated, assuming that there is no correlation between rating scores. In fact, Error rate = 1 - Accuracy. Accuracy, instead, mainly depends on the performance that the algorithm achieves on the biggest classes. Moreover, we will see in this chapter why Cohen's Kappa can also be useful in evaluating the performance of two different models when they are applied to two different databases, since it allows a comparison between them. Referring to the confusion matrix in Figure 2, since Precision and Recall do not consider the True Negative elements, we calculate the binary F1-Score as follows: F1 = 2 · Precision · Recall / (Precision + Recall). The F1-Score for the binary case takes into account both Precision and Recall. It is worth noting that the technique does not rely on the Confusion Matrix; instead it directly employs the variables Y and Ŷ.
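The Model A and Model B scores quoted in the text can be reproduced with a few lines. This is only an illustrative sketch: the function names are assumptions, and the inputs are the Precision and Recall values given for the two models (80% and 80% for Model A, 60% and 100% for Model B).

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0 when both are 0)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

def arithmetic_mean(precision, recall):
    return (precision + recall) / 2

model_a = (0.80, 0.80)   # Precision, Recall of Model A
model_b = (0.60, 1.00)   # Precision, Recall of Model B

for name, (p, r) in {"A": model_a, "B": model_b}.items():
    print(name, round(arithmetic_mean(p, r), 4), round(f1_score(p, r), 4))
```

Both models have the same arithmetic mean (0.80), but the harmonic mean penalises the lower Precision of Model B, which ends up with 0.75.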

Many measures can be used to evaluate a multi-class classifier's performance.

He calculated the inter-observer agreement taking into account the expected agreement by chance as follows: K = (P_o - P_e) / (1 - P_e), where P_o is the observed agreement and P_e is the agreement expected by chance. But, since we want the Predicted and Actual distributions to be as dependent as possible, Cohen's Kappa score directly subtracts this Expected Accuracy from the observed agreement in the numerator of the formula. In fact the harmonic mean tends to give more weight to lower values. So predicting a probability of 0.012 when the actual observation label is 1 would be bad and result in a high loss value. Referring to the multi-class Confusion Matrix C in Figure 8: t_k = Σ_i C_ik is the number of times that class k truly occurs (row total). Just as a reminder, two dependent variables are also correlated and identified by reciprocal agreement. First, an N x N histogram matrix O is constructed, such that O_{i,j} corresponds to the number of adoption records that have a rating of i (actual) and received a predicted rating j. The two algorithms have the same prediction for class 2. Fourth, calculate E, which is the outer product of the two `value_counts()` vectors. Eventually we consider the average of the Cross-Entropy values for the single units, to obtain a measure of agreement on the entire dataset (Formula 23). When evaluating and comparing machine learning algorithms on multi-class targets, performance metrics are extremely valuable. The actual classification shows an unbalanced distribution for the classes. The F1 score can be interpreted as a weighted average of the precision and recall, where an F1 score reaches its best value at 1 and worst score at 0. This may have some drawbacks, as shown in Figure 6: the i-th unit gets predicted by two different algorithms, obtaining two distinct distributions. First we evaluate the Recall for each class, then we average the values in order to obtain the Balanced Accuracy score. A classification model gives us the probability of belonging to a specific class for each possible unit.
We invite you to read the excellent book Approaching (Almost) Any Machine Learning Problem. In other words, the cost function measures how similar the distribution of actual labels is to the classifier probabilities. Both of them give more importance to big classes, because they just consider all the units together. We have shown this topic in Figure 9, where the model assigns all the elements to only one class and the value of MCC falls to 0, even if the Accuracy achieves a great value (0.80) and the Recall for the first class assumes the highest value (1). When we think about classes instead of individuals, there will be classes with a high number of units and others with just a few. The F-score is calculated from the precision and recall of the test, where the precision is the number of true positive results divided by the number of all positive results, including those not identified correctly, and the recall is the number of true positive results divided by the number of all samples that should have been identified as positive. The expected matrix E is calculated as the outer product between the histogram vector of actual ratings and the histogram vector of predicted ratings, normalized such that E and O have the same sum. Cross-Entropy exploits only the value of p(Ŷ_i = k | X_i) for the value k representing the true class. The aim is to get as close to 1 as possible. Many metrics come in handy to test the ability of a multi-class classifier.
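The Quadratic Weighted Kappa steps mentioned in the text (observed matrix O, rating histograms, expected matrix E as a normalized outer product) can be put together as in the sketch below. Several points are assumptions made for illustration: ratings are integers from 0 to N-1, the weights follow the usual quadratic form w_ij = (i - j)² / (N - 1)², and `np.bincount` stands in for the `value_counts()` call named in the text.

```python
import numpy as np

def quadratic_weighted_kappa(actuals, preds, n_ratings):
    """Quadratic Weighted Kappa for integer ratings in 0 .. n_ratings - 1."""
    actuals = np.asarray(actuals)
    preds = np.asarray(preds)

    # Observed matrix O: O[i, j] counts units with actual rating i, predicted j.
    O = np.zeros((n_ratings, n_ratings))
    for a, p in zip(actuals, preds):
        O[a, p] += 1

    # Quadratic weights: 0 on the diagonal, 1 for the largest disagreement.
    i, j = np.indices((n_ratings, n_ratings))
    W = (i - j) ** 2 / (n_ratings - 1) ** 2

    # Histograms of actual and predicted ratings.
    hist_actual = np.bincount(actuals, minlength=n_ratings)
    hist_pred = np.bincount(preds, minlength=n_ratings)

    # Expected matrix E: outer product, rescaled so E and O have the same sum.
    E = np.outer(hist_actual, hist_pred).astype(float)
    E *= O.sum() / E.sum()

    # Undefined when (W * E).sum() is 0, e.g. all ratings identical.
    return 1.0 - (W * O).sum() / (W * E).sum()

print(quadratic_weighted_kappa([0, 1, 2, 2], [0, 1, 2, 2], 3))  # perfect agreement
print(quadratic_weighted_kappa([0, 0, 2, 2], [2, 2, 0, 0], 3))  # complete disagreement
```

The two calls illustrate the extremes of the range: 1 for perfect agreement and -1 when every prediction is as far as possible from the actual rating.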

As the average of the Cross-Entropy over each unit in a dataset, it is a measure of agreement between two probability distributions (predicted and true classification). The quadratic weighted kappa is calculated as follows. Cross-Entropy is detached from the confusion matrix and it is widely employed thanks to its fast calculation. In this post, we go through a list of the most often used multi-class metrics, their benefits and drawbacks, and how they can be employed in the building of a classification model. In particular, False Negatives are the elements that have been labelled as negative by the model but are actually positive. On the other hand, the metric is very intuitive and easy to understand. Accuracy is one of the most popular metrics in multi-class classification and it is directly computed from the confusion matrix. When a large class such as class "c" is predicted poorly, its bad performance is also captured by the Accuracy. In this way each class has an equal weight in the final calculation of Balanced Accuracy and each class is represented by its recall, regardless of its size. To give some intuition about the F1-Score behaviour, we review the effect of the harmonic mean on the final score. In the multi-class case, instead, there are various possibilities; among them, the highest probability value and the softmax are the most employed techniques.
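A short sketch can show that Cross-Entropy only looks at the probability assigned to the true class. The function name, the clipping constant `eps` and the two probability vectors are assumptions introduced for illustration; class labels are taken to be integers starting at 0.

```python
import numpy as np

def cross_entropy(y_true, probs, eps=1e-15):
    """Average negative log-probability assigned to the true class.

    y_true: integer class labels, shape (n_units,)
    probs:  predicted probabilities, shape (n_units, n_classes)
    eps:    clipping value to avoid log(0) (an assumed safeguard)
    """
    y_true = np.asarray(y_true)
    probs = np.clip(np.asarray(probs, dtype=float), eps, 1.0)
    true_class_probs = probs[np.arange(len(y_true)), y_true]
    return -np.log(true_class_probs).mean()

# Two hypothetical algorithms give the true class (index 0) the same
# probability, 0.4, but spread the remaining mass differently.
algo_a = [[0.4, 0.5, 0.1]]
algo_b = [[0.4, 0.1, 0.5]]
print(cross_entropy([0], algo_a), cross_entropy([0], algo_b))
```

Both calls return the same loss, -ln(0.4), even though the two distributions differ on the remaining classes.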

Quadratic Weighted Kappa measures the agreement between two ratings. The MAP@K metric measures the AP@K for recommendations shown for different users and averages them over all queries in the dataset. In statistical analysis of binary classification, the F-score or F-measure is a measure of a test's accuracy.

From these three matrices, the quadratic weighted kappa is calculated. Precision is also known as positive predictive value, and recall is also known as sensitivity in diagnostic binary classification. This allows for the comparison between different models applied to different samples of data. Regarding the denominator, it is employed to rescale the fraction to the interval [-1, +1]; in fact, it corresponds to the maximum absolute value the numerator may assume. From an algorithmic standpoint, the prediction task is addressed using state-of-the-art mathematical techniques. It is an average measure which is suitable for balanced datasets because it does not consider the class distribution. Instead, K is negative when the agreement between the algorithm and the true label distribution is worse than random agreement, so that there is no accordance between the model's Prediction and the Actual classification. However, it takes into account only the true class probability p(ŷ_i = k), without caring about how the probability mass is distributed among the remaining classes. In our case a high correlation is observed when the model's Prediction assigns a unit to one class and the same unit has also been assigned to the same class by the Actual classification. In this case numerator and denominator take a different shape compared to the binary case, and this can partially help to find more stable results inside the range [-1, +1] of MCC. CRIF S.p.A., via Mario Fantin 1-3, 40131 Bologna (BO), Italy; Università degli Studi di Bologna, Dipartimento di Ingegneria e Scienze Informatiche, viale Risorgimento 2, 40136 Bologna (BO), Italy. From a practical perspective, Cross-Entropy is widely employed thanks to its fast calculation. Using this metric, it is not possible to identify the classes where the algorithm is working worse.

Precision and Recall for each class are computed using the same formulas as in the binary setting, with the labelling described above.
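As a rough sketch of the per-class computation, the snippet below derives Precision and Recall for every class from a confusion matrix and combines their averages into a Macro F1-Score, taken here as the harmonic mean of Macro-Precision and Macro-Recall as stated in the text. The matrix, the function names and the row/column orientation (rows = actual, columns = predicted) are assumptions; every class is assumed to be both present and predicted at least once.

```python
import numpy as np

def per_class_precision_recall(confusion):
    """Precision (TP / column total) and Recall (TP / row total) for each class."""
    c = np.asarray(confusion, dtype=float)
    tp = np.diag(c)
    precision = tp / c.sum(axis=0)
    recall = tp / c.sum(axis=1)
    return precision, recall

def macro_f1(confusion):
    """Harmonic mean of Macro-Precision and Macro-Recall."""
    precision, recall = per_class_precision_recall(confusion)
    macro_p, macro_r = precision.mean(), recall.mean()
    return 2 * macro_p * macro_r / (macro_p + macro_r)

# Hypothetical confusion matrix.
cm_macro = [[2, 8, 0],
            [1, 45, 4],
            [0, 10, 30]]
precision_k, recall_k = per_class_precision_recall(cm_macro)
print(precision_k, recall_k, macro_f1(cm_macro))
```

Note that some libraries define the macro F1 differently, as the plain average of the per-class F1 scores, so the two values need not coincide.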
